Delivering the best possible AI compute performance means optimizing every part of the system for the specific demands of artificial intelligence, including storage.

Following the recent addition of leading storage companies to our Elite Partner Program, we are now publishing our first reference architecture, in partnership with Pure Storage.

The technical guide details how Graphcore users can take advantage of Pure’s flexible, high-performance FlashBlade technology as part of their IPU-POD configuration.

Both Pure and Graphcore are committed to delivering industry-leading performance, while supporting customers’ AI journeys as they seamlessly transition to next-generation compute systems.

PURE INNOVATION

FlashBlade is an all-flash system, optimized for storing and processing unstructured data, and scalable up to multi-petabyte capacity.

The system is based on five key innovations:

- High-performance storage – all-flash system pairing NAND flash with integrated NVRAM.

- Unified network – consolidating high communication traffic over a single network, supporting IPv4 and IPv6 client access over Ethernet at up to 1.6Tb/s.

- Purity//FB storage operating system – symmetrical operating system running on FlashBlade’s fabric modules, balancing client operation requests across blades.

- Common media architectural design for files and objects – a single underlying media architecture supports concurrent access to files via NFS, NFS over HTTP and SMB, as well as to objects via the Amazon S3 protocol.

- Simple usability – Purity//FB on FlashBlade performs routine administrative tasks autonomously, self-tuning and providing component failure alerts.

SEAMLESS INTEGRATION

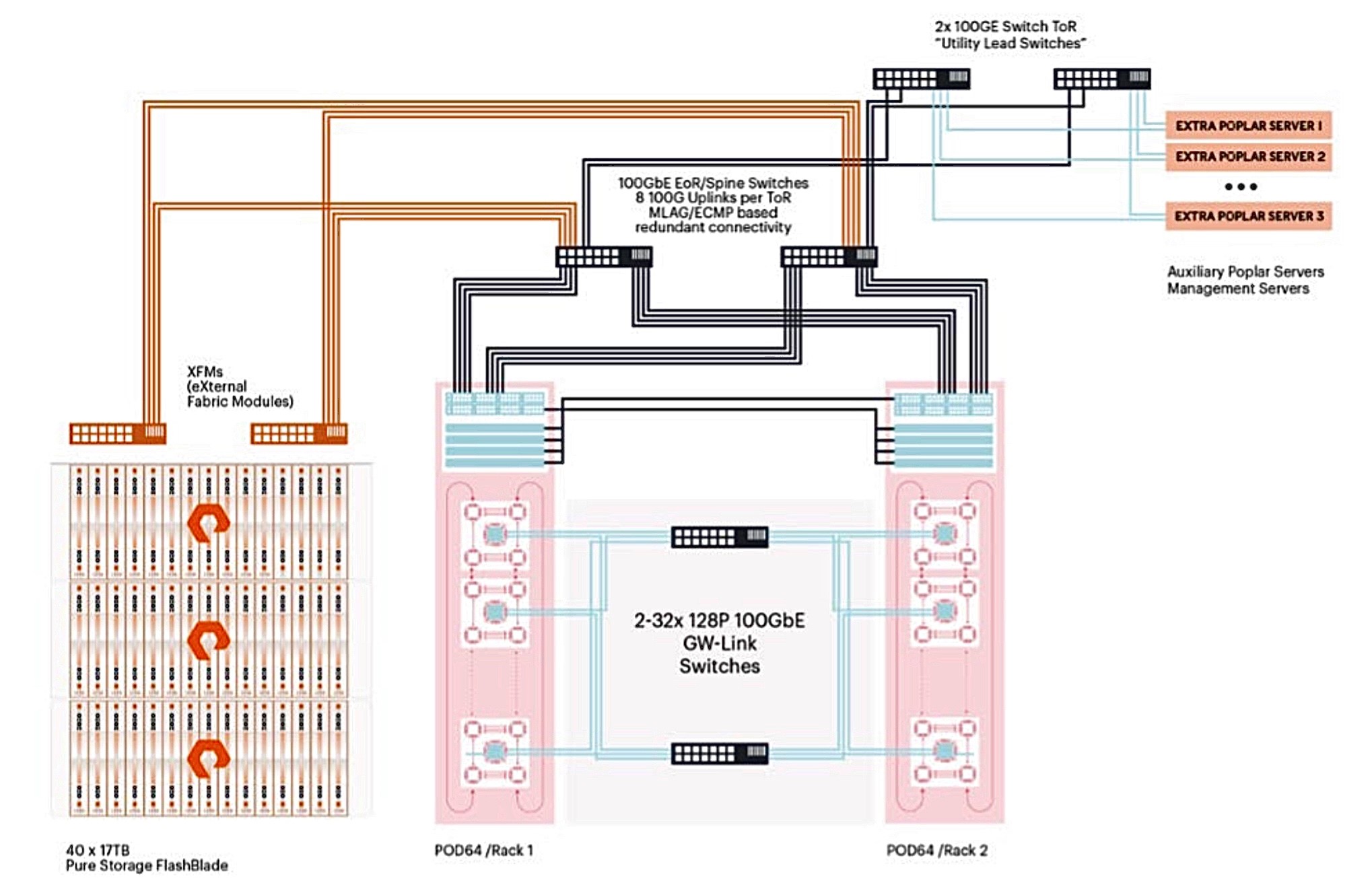

The Pure Storage and Graphcore reference architecture describes the host, storage, and networking configuration of an IPU-POD64 system paired with the Pure Storage FlashBlade storage solution.

In addition to the IPU-POD configuration detailed in the reference architecture, the FlashBlade storage solution was architected as follows:

- 40 x 17TB FlashBlade with redundant external fabric modules (XFM)

- External fabric modules connected to Arista leaf switches via eight 100GbE connections configured as a single LACP/MLAG group
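
To put the configuration above into numbers, here is a minimal back-of-the-envelope sketch in Python. It assumes decimal terabytes and nominal Ethernet line rates, and it reports raw capacity only: usable capacity after redundancy and data reduction, and real-world throughput, are not stated here and will differ. The function and constant names are illustrative, not part of any Pure Storage or Graphcore tooling.

```python
# Nominal figures taken from the configuration list above.
BLADE_COUNT = 40
BLADE_CAPACITY_TB = 17    # raw capacity per blade, assuming decimal TB
UPLINK_COUNT = 8
UPLINK_SPEED_GBIT = 100   # nominal line rate per 100GbE connection, in Gb/s


def raw_capacity_tb(blades: int, tb_per_blade: int) -> int:
    """Raw capacity in TB, before any redundancy or data-reduction effects."""
    return blades * tb_per_blade


def aggregate_uplink_gbit(links: int, gbit_per_link: int) -> int:
    """Nominal aggregate bandwidth of the LACP/MLAG group in Gb/s."""
    return links * gbit_per_link


capacity_tb = raw_capacity_tb(BLADE_COUNT, BLADE_CAPACITY_TB)
uplink_gbit = aggregate_uplink_gbit(UPLINK_COUNT, UPLINK_SPEED_GBIT)
uplink_gbyte = uplink_gbit / 8  # convert bits to bytes

print(f"Raw capacity: {capacity_tb} TB")
print(f"Nominal uplink: {uplink_gbit} Gb/s (~{uplink_gbyte:.0f} GB/s)")
```

These are upper bounds derived from the part counts alone; the reference architecture document remains the source for measured figures.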

PERFORMANCE RESULTS

As part of the joint reference architecture, Graphcore and Pure benchmarked system performance running ResNet-50 and BERT-Large, along with a number of standardised storage benchmarks for AI workloads.

Near-linear scaling was achieved as we increased the number of jobs run on the infrastructure, while optimal IPU performance was delivered with significant bandwidth capacity remaining on the 40-blade system.
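
For readers who want to check scaling on their own system, "near-linear" can be read as measured aggregate throughput staying close to the single-job throughput multiplied by the number of jobs. The sketch below shows one way to compute that ratio. The `scaling_efficiency` helper and the input numbers are hypothetical placeholders chosen for illustration; they are not results from the Graphcore and Pure benchmarks.

```python
def scaling_efficiency(throughput_by_jobs: dict[int, float]) -> dict[int, float]:
    """Return measured / ideal throughput for each job count.

    The ideal value assumes perfectly linear scaling from the smallest
    job count in the input, so an efficiency of 1.0 means linear scaling.
    """
    base_jobs = min(throughput_by_jobs)
    per_job = throughput_by_jobs[base_jobs] / base_jobs
    return {
        jobs: measured / (per_job * jobs)
        for jobs, measured in sorted(throughput_by_jobs.items())
    }


# Placeholder measurements (jobs -> aggregate throughput, arbitrary units).
# These values are made up for the example and are NOT benchmark results.
example = {1: 100.0, 2: 196.0, 4: 380.0}

for jobs, eff in scaling_efficiency(example).items():
    print(f"{jobs} job(s): {eff:.0%} of linear")
```

Efficiencies that stay close to 100% as jobs are added indicate that storage and networking are not yet the bottleneck.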

More detail on benchmark performance is available in the reference architecture document.

For more details on Graphcore’s storage partners, read our blog.

To find a channel, technology or ecosystem partner, visit our Partner Program page.