In the Paperspace Gradient Notebook, Text entailment on IPU using GPT-J fine tuning we are using the unifying approach presented in the paper ‘Exploring the limits of transfer learning with a unified text-to-text transformer’, known as the T5 paper.

For more on using GPT-J on Graphcore IPUs see our blog Fine-tune GPT-J: a cost effective GPT-4 alternative for many NLP tasks.

Fine-tuning: Text entailment on IPU using GPT-J

The central idea is that every downstream task every task can be formulated as a text-to-text problem where the generative model is simply asked to to generate the next token.

This being the case, fine-tuning becomes very simple, as there is no need to change the model architecture. Rather you simply train the model autoregressively to generate the next token.

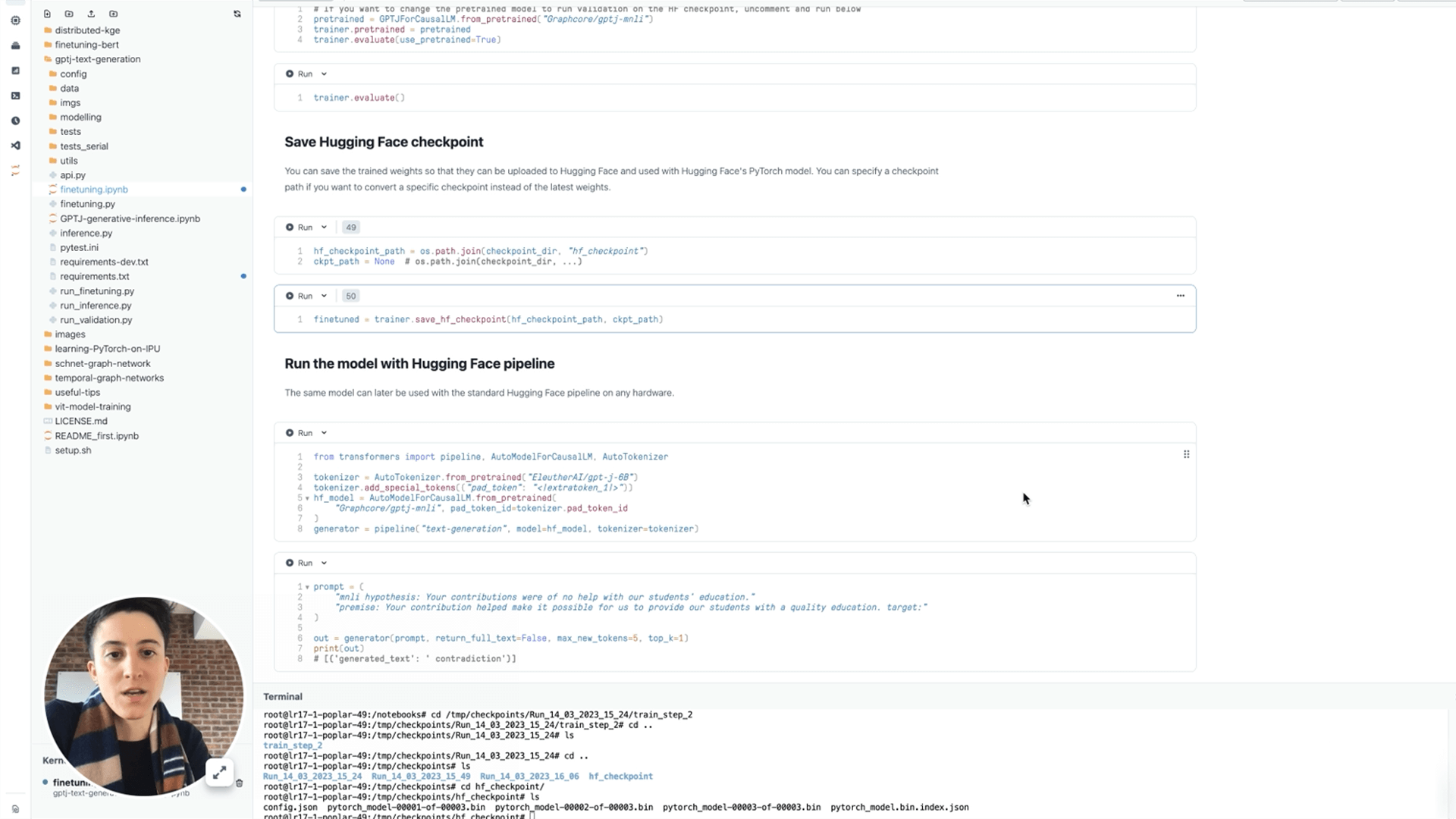

In this walkthrough tutorial, using a Paperspace Gradient Notebook running on Graphcore IPUs, we apply this idea to fine tune GPT-J on Glue MNLI dataset.

The task here is to determine entailment. Which means that given a pair of sentences - a hypotheses and a premise - the model has to determine whether the premise entails the hypothesis, the hypothesis contradicts the premise, or the two sentences are neutral to each other.

In the notebook, you will learn how these tasks can be cast into the text-to-text format, and how you can use our very simple API to fine tune the model on IPUs.

The code is very easily adaptable to other datasets allowing you to fine-tune for a range of applications, including Question Answering, Named Entity Recognition, Sentiment Analysis, and Text Classification.

You can also run GPT-J for text generation inference on a Paperspace Gradient Notebook.

Inference: Text generation on IPU using GPT-J