For more performance results, visit our Performance Results page.

Bow Pod16 opens up a new world of machine intelligence innovation.

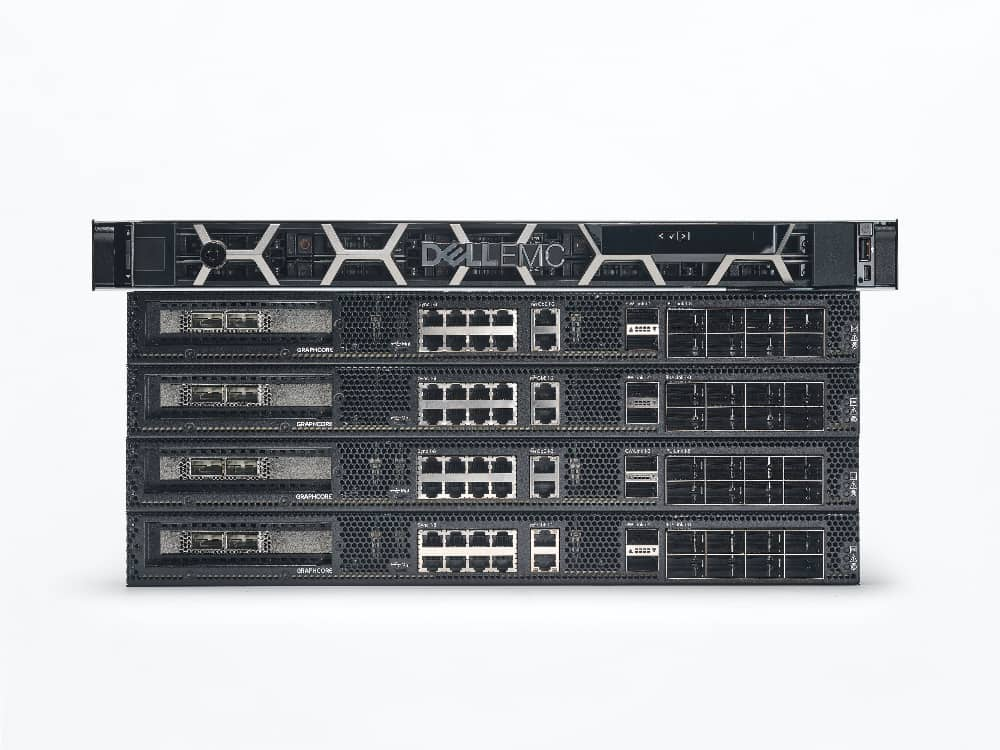

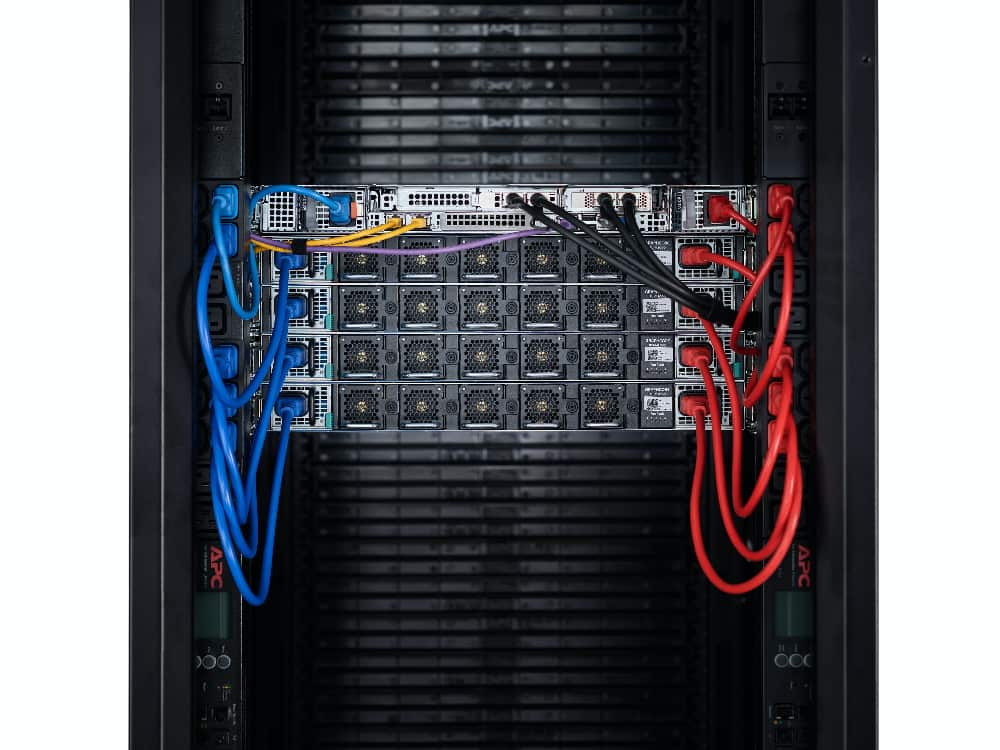

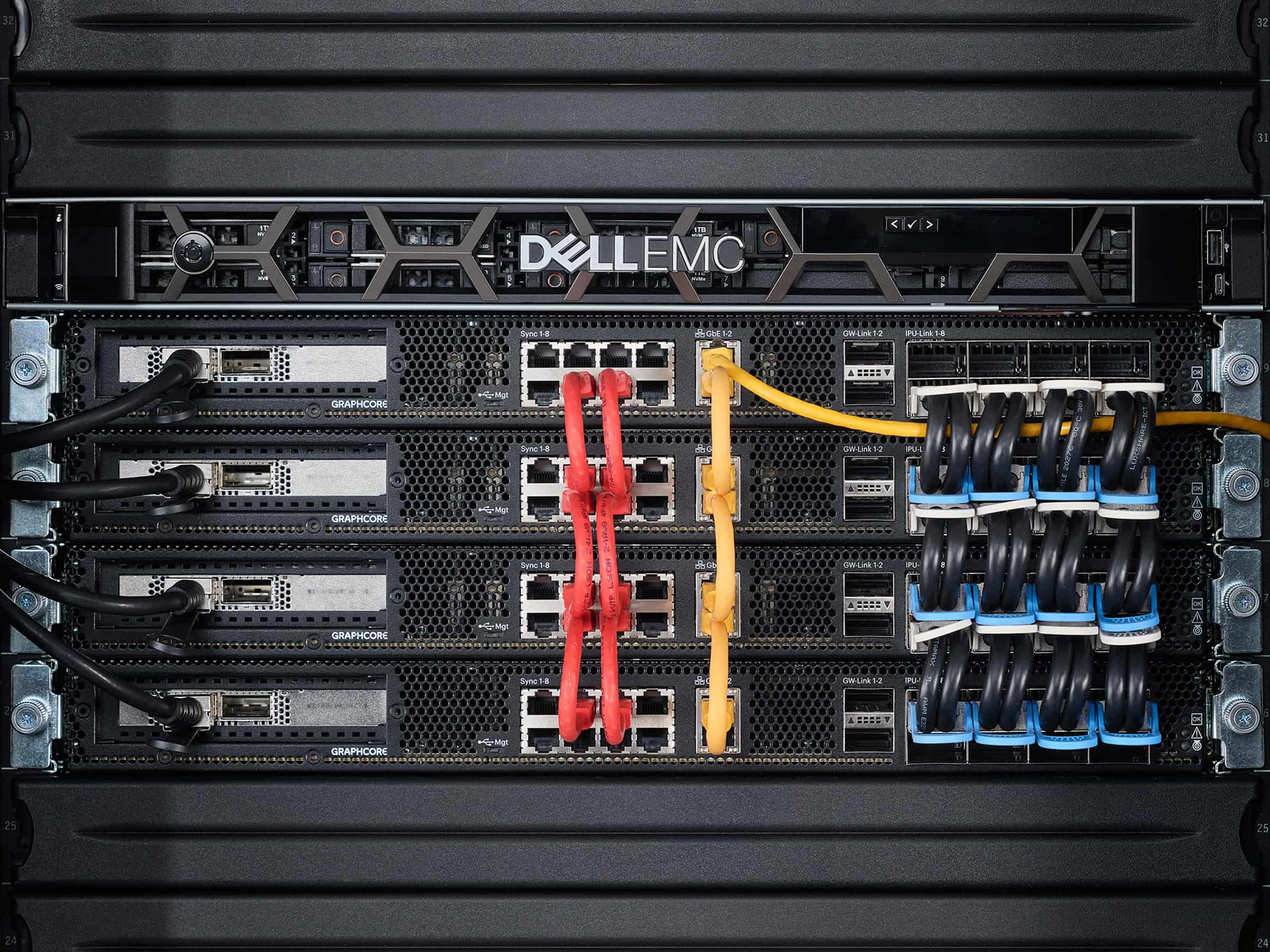

Built from four interconnected Bow-2000s and a pre-qualified host server from your choice of leading technology brands, Bow Pod16 is available to purchase today, in the cloud or for your datacenter, from our global network of channel partners and systems integrators.

PERFORMANCE

World-class results, whether you want to explore innovative models and new possibilities or achieve faster time to train, higher throughput, or better performance per TCO dollar.

| Specification | Bow Pod16 |
| --- | --- |
| Processors | 16x Bow IPUs |
| 1U blade units | 4x Bow-2000 machines |
| Memory | 14.4 GB In-Processor-Memory™; up to 1 TB Streaming Memory™ |
| Performance | 5.6 petaFLOPS FP16.16; 1.4 petaFLOPS FP32 |
| Separate Cores | 23,552 |
| Threads | 141,312 |
| Host-Link | 100 GE RoCEv2 |
| Software | Poplar SDK; TensorFlow, PyTorch, PyTorch Lightning, Keras, PaddlePaddle, Hugging Face, ONNX, HALO; OpenBMC, Redfish DMTF, IPMI over LAN, Prometheus, and Grafana; Slurm, Kubernetes; OpenStack, VMware ESG |
| System Weight | 66 kg + Host server |
| System Dimensions | 4U + Host servers and switches |
| Host Server | Selection of approved host servers from Graphcore partners |
| Storage | Selection of approved systems from Graphcore partners |
| Thermal | Air-Cooled |
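
The system totals above can be broken down into rough per-IPU figures with simple division. The sketch below is illustrative only: it assumes that cores, threads, In-Processor-Memory, and compute are split evenly across the 16 Bow IPUs, which the specification table does not state explicitly, and the constant and function names are made up for this example.

```python
from fractions import Fraction

# System totals copied from the Bow Pod16 specification table.
NUM_IPUS = 16
TOTAL_CORES = 23_552
TOTAL_THREADS = 141_312
TOTAL_IN_PROCESSOR_MEMORY_GB = Fraction("14.4")
TOTAL_FP16_16_PETAFLOPS = Fraction("5.6")
TOTAL_FP32_PETAFLOPS = Fraction("1.4")


def per_ipu(total):
    """Divide a system total evenly across the IPUs (an assumption)."""
    return Fraction(total) / NUM_IPUS


# Exact arithmetic with Fraction avoids floating-point rounding noise.
cores_per_ipu = per_ipu(TOTAL_CORES)
threads_per_core = Fraction(TOTAL_THREADS, TOTAL_CORES)
memory_per_ipu_mb = per_ipu(TOTAL_IN_PROCESSOR_MEMORY_GB) * 1000  # GB to MB
fp16_16_teraflops_per_ipu = per_ipu(TOTAL_FP16_16_PETAFLOPS) * 1000  # peta to tera
fp32_teraflops_per_ipu = per_ipu(TOTAL_FP32_PETAFLOPS) * 1000

if __name__ == "__main__":
    print(f"Cores per IPU:             {int(cores_per_ipu)}")
    print(f"Threads per core:          {int(threads_per_core)}")
    print(f"In-Processor-Memory (MB):  {float(memory_per_ipu_mb)}")
    print(f"FP16.16 teraFLOPS per IPU: {float(fp16_16_teraflops_per_ipu)}")
    print(f"FP32 teraFLOPS per IPU:    {float(fp32_teraflops_per_ipu)}")
```

Under the even-split assumption, this works out to 1,472 cores and 900 MB of In-Processor-Memory per IPU, with six threads per core. Treat these as derived estimates rather than published per-IPU specifications.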