Today, Graphcore’s Poplar® SDK is available for developers to access through Docker Hub, with Graphcore joining Docker’s Verified Publisher Program. Together with Docker, we’re distributing our software stack as container images, enabling developers to easily build, manage and deploy ML applications on Graphcore IPU systems.

We continue to enhance the developer experience to make our hardware and software even easier to use. Just over a year ago we introduced a selection of pre-built Docker containers for users. Now, we are making our Poplar SDK, PyTorch for IPU, TensorFlow for IPU and Tools fully accessible to everyone in the Docker Hub community as part of our mission to drive innovation.

Here’s more information on what’s in it for developers and how to get started.

Why Docker is so important to our community

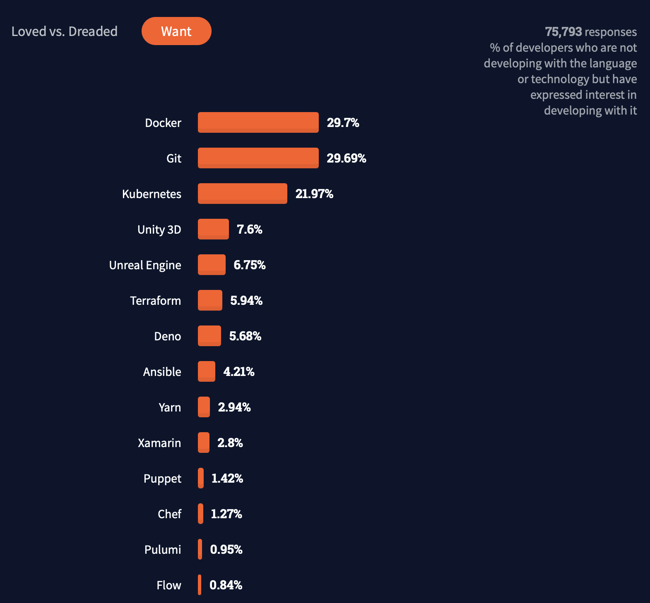

Docker has become the primary source for pulling container images – according to the latest Index Report, there’s been a total of 396 billion all-time pulls on Docker Hub. Furthermore, Docker Hub remains one of the “most wanted, loved and used” developer tools based on the 2021 Stack Overflow Survey answered by 80,000 developers.

For IPU developers, our Docker container images simplify and accelerate the development of applications destined for production on IPU systems. They supply pre-packaged runtime environments for applications built using PyTorch, TensorFlow or directly with Graphcore’s Poplar SDK. Containerised applications are more portable, execute consistently and repeatably, and are an important enabler for many MLOps frameworks.

What’s available for developers?

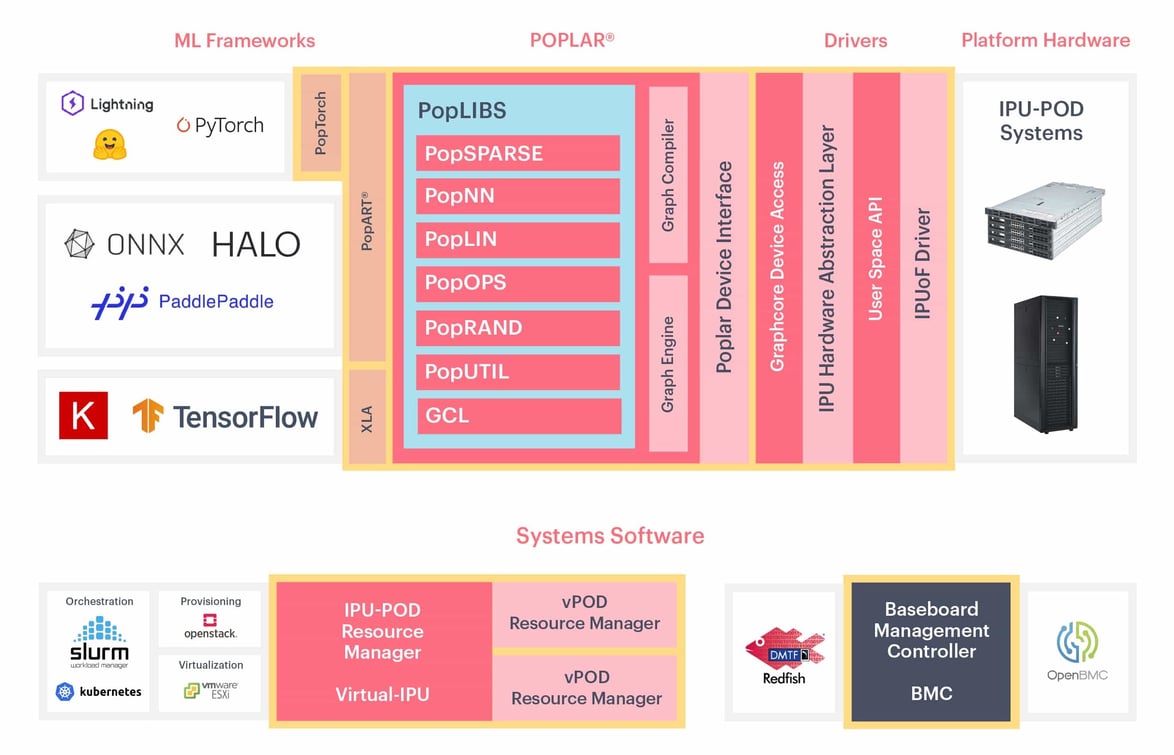

As of today, developers can freely install our Poplar software stack, which was co-designed with the IPU (Intelligence Processing Unit) specifically for machine intelligence applications. Poplar is Graphcore’s graph toolchain. It sits at the core of our easy-to-use and flexible software development environment and is fully integrated with standard machine learning frameworks, so developers can easily port existing models. For developers who want full control to extract maximum performance from the IPU, Poplar enables direct IPU programming in Python and C++ through PopART™ (Poplar Advanced Runtime).

Our Poplar SDK images can be pulled via the following repositories:

- Poplar SDK – contains Poplar, PopART and tools to interact with IPU devices

- PyTorch for IPU – contains everything in the Poplar SDK repo with PyTorch pre-installed

- TensorFlow for IPU – contains everything in the Poplar SDK repo with TensorFlow 1 or 2 pre-installed

- Tools – contains management and diagnostic tools for IPU devices

As part of the Docker Verified Publisher Program, Graphcore container images are exempt from rate limiting, so developers can make unlimited container image requests for Poplar regardless of their Docker Hub subscription.

Getting Started with Poplar on Docker

The Poplar Docker containers encapsulate everything needed to run models on the IPU in a complete filesystem: Graphcore’s Poplar® SDK, the runtime environment, system tools, configs, and libraries. To use these images and run IPU code, you will need to complete the following steps:

1. Install Docker on the host machine

2. Pull Graphcore's Poplar SDK container images from Docker Hub

3. Prepare Access to IPUs

4. Verify IPU accessibility with Docker container

5. Run a sample app on IPUs

Install Docker on the host machine

Docker installation varies based on operating system, version, and processor.

You can follow Docker’s getting started guide.

Pull Graphcore's Poplar SDK container images from Docker Hub

Once Docker is installed, you can run commands to download our hosted images from Docker Hub and run them on the host machine. The Poplar SDK container images can be pulled from the Graphcore repositories on Docker Hub.

There are four repositories and these repositories may contain multiple images based on the SDK version, OS, and architecture.

- graphcore/pytorch

- graphcore/tensorflow

- graphcore/poplar

- graphcore/tools

By default, pulling from a framework repo downloads the latest version of the SDK compiled for AMD host processors.

To pull the latest TensorFlow image use:

$ docker pull graphcore/tensorflow

If you want a specific build for a particular SDK version and processor, you can select it with an image tag, as described in Docker Image Tags.
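As a minimal sketch of how a tag is attached to a repository name, the snippet below builds an image reference and prints the matching pull command. The helper name `image_ref` is hypothetical. The tag `2` is the one used for the TensorFlow image later in this post; any other tag name should be checked on Docker Hub before use.

```shell
# Build an image reference from a repository and an optional tag.
# Docker falls back to the "latest" tag when none is given.
# image_ref is a hypothetical helper, not part of any Graphcore tool.
image_ref() {
    repo="$1"
    tag="${2:-latest}"
    printf '%s:%s\n' "$repo" "$tag"
}

# Print the pull command for the TensorFlow 2 image rather than running it.
echo "docker pull $(image_ref graphcore/tensorflow 2)"
```

Run the printed command as-is to download that image.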

Prepare Access to IPUs

To talk to the IPUs in PODs, we must configure the connection between the host machines and the IPUs, known as IPU over Fabric (IPUoF). The information that Poplar needs to access devices can be passed via an IPUoF configuration file which is, by default, written to a directory in your home directory (~/.ipuof.conf.d). The configuration files are useful when the Poplar hosts do not have direct network access to the V-IPU controller (for security reasons, for example).

If you are using Graphcloud, the IPUoF default config file is generated every time a new user is created and added to the POD. Check if there are .conf files inside that folder (e.g. ~/.ipuof.conf.d/lr21-3-16ipu.conf). If you have this setup, you can proceed to the next step.

If no configuration file is available, you will need to configure Poplar to connect to the V-IPU server by following the V-IPU Guide: Getting Started. Make sure to save your IPUoF config file in the folder ~/.ipuof.conf.d so that the commands in the next section can find it.
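The check for existing configuration files can be scripted. The following is a small sketch, assuming the default ~/.ipuof.conf.d location described above; the function name `has_ipuof_config` is hypothetical.

```shell
# Succeed if the given directory holds at least one *.conf file.
# Defaults to the IPUoF directory named in this post.
# has_ipuof_config is a hypothetical helper name.
has_ipuof_config() {
    dir="${1:-$HOME/.ipuof.conf.d}"
    for f in "$dir"/*.conf; do
        # An unmatched glob stays literal, so confirm the file exists.
        if [ -f "$f" ]; then
            return 0
        fi
    done
    return 1
}

if has_ipuof_config; then
    echo "IPUoF config found"
else
    echo "No IPUoF config found; configure V-IPU access first"
fi
```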

Verify IPU accessibility with Docker container

Now that you have the container ready, you can check if the IPU is accessible from inside the container.

List the IPU devices within the context of the container by running the following:

$ docker run --rm --ulimit memlock=-1:-1 --net=host --cap-add=IPC_LOCK --device=/dev/infiniband --ipc=host -v ~/.ipuof.conf.d/:/etc/ipuof.conf.d -it graphcore/tools gc-info -l
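The command above is long, so it can help to wrap it. The sketch below uses a hypothetical function, `ipu_docker_run`, that spells out one flag per line with a short note on what each Docker option does. Setting the (also hypothetical) `DRY_RUN` variable prints the command instead of executing it.

```shell
# Run a command in a Graphcore image with the flags used in this post.
# Arguments: image reference, then the command to run in the container.
# ipu_docker_run and DRY_RUN are hypothetical names for illustration.
ipu_docker_run() {
    image="$1"
    shift
    # --ulimit memlock=-1:-1  lift the locked-memory limit
    # --net=host              use the host's network stack
    # --cap-add=IPC_LOCK      allow the container to lock memory
    # --device=...            expose the host's InfiniBand device
    # --ipc=host              share the host's IPC namespace
    # -v ...                  mount the IPUoF config directory
    ${DRY_RUN:+echo} docker run --rm \
        --ulimit memlock=-1:-1 \
        --net=host \
        --cap-add=IPC_LOCK \
        --device=/dev/infiniband \
        --ipc=host \
        -v "$HOME/.ipuof.conf.d/:/etc/ipuof.conf.d" \
        -it "$image" "$@"
}

# Print the command that lists IPU devices, without running it.
DRY_RUN=1
ipu_docker_run graphcore/tools gc-info -l
```

Unset `DRY_RUN` to execute the command for real on a host with Docker and IPU access.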

Running a sample TensorFlow app

First, get the code from the Graphcore tutorials repository in GitHub.

$ git clone https://github.com/graphcore/tutorials.git

$ cd tutorials

The Docker container is an isolated environment. It starts without access to the host machine’s file system, so any data on the host that you want to use must first be made accessible inside the container.

You can make the data accessible by mounting directories as volumes to share data between the host machine and the Docker container environment.

A common pattern when working with a Docker-based development environment is to mount the current directory into the container (as described in Section 2.2, Mounting directories from the host), then set the working directory inside the container with -w <dir name>. For example, -v "$(pwd):/app" -w /app.

To run the MNIST example in the TensorFlow container, you can use the following command, which mounts the tutorials repo into the Docker container and runs the example.

$ docker run --rm --ulimit memlock=-1:-1 --net=host --cap-add=IPC_LOCK --device=/dev/infiniband --ipc=host -v ~/.ipuof.conf.d/:/etc/ipuof.conf.d -it -v "$(pwd):/app" -w /app graphcore/tensorflow:2 python3 simple_applications/tensorflow2/mnist/mnist.py

Developer Resources & Links