LabGenius is engaged in AI-driven scientific research that could only have happened at this moment in time, and the cause couldn’t be more important.

Today, the company is focused on accelerating the discovery of advanced treatments for cancer and inflammatory diseases, but the principles can be applied far more widely.

Using a combination of artificial intelligence, synthetic biology and laboratory automation, the London-based biotech company is developing next-generation antibody therapeutics.

The technologies and techniques involved have only recently reached the level of maturity needed for this ambitious undertaking.

So, when IPU systems halved the compute time needed to run crucial AI model training, LabGenius’ researchers realised that they had found a new and important tool in the race to innovate. The team used an off-the-shelf PyTorch version of the Transformer model, BERT, with code freely available on Graphcore’s GitHub site, making it easy to use.

“Previously we used GPUs and it took us about a month to have a functioning model of all the proteins that are out there. With Graphcore, we reduced the turnaround time to about two weeks, so we can experiment much more rapidly, and we can see the results quicker,” said Dr Katya Putintseva, a Machine Learning Advisor to LabGenius.

The protein problem

Finding or designing proteins with precisely the right qualities to treat medical conditions is notoriously complex. Only in the last couple of years have we started to see the first AI-designed small molecules enter clinical trials, marking a new era of drug discovery.

Even with protein design technologies, knowing how to adjust a protein’s constituent amino acids precisely to improve its function is a huge challenge: beyond the scope of humans on their own and extremely difficult even with the help of conventional computation, but a problem well suited to artificial intelligence.

To exploit this new technology, LabGenius is creating an automated, closed-loop system that manages experimental iteration and the back-and-forth between biological experimentation and machine learning-powered decision-making. Proteins are sequenced, intelligently analysed, modified and re-synthesised in the search for the perfect protein recipe.

Beautiful data

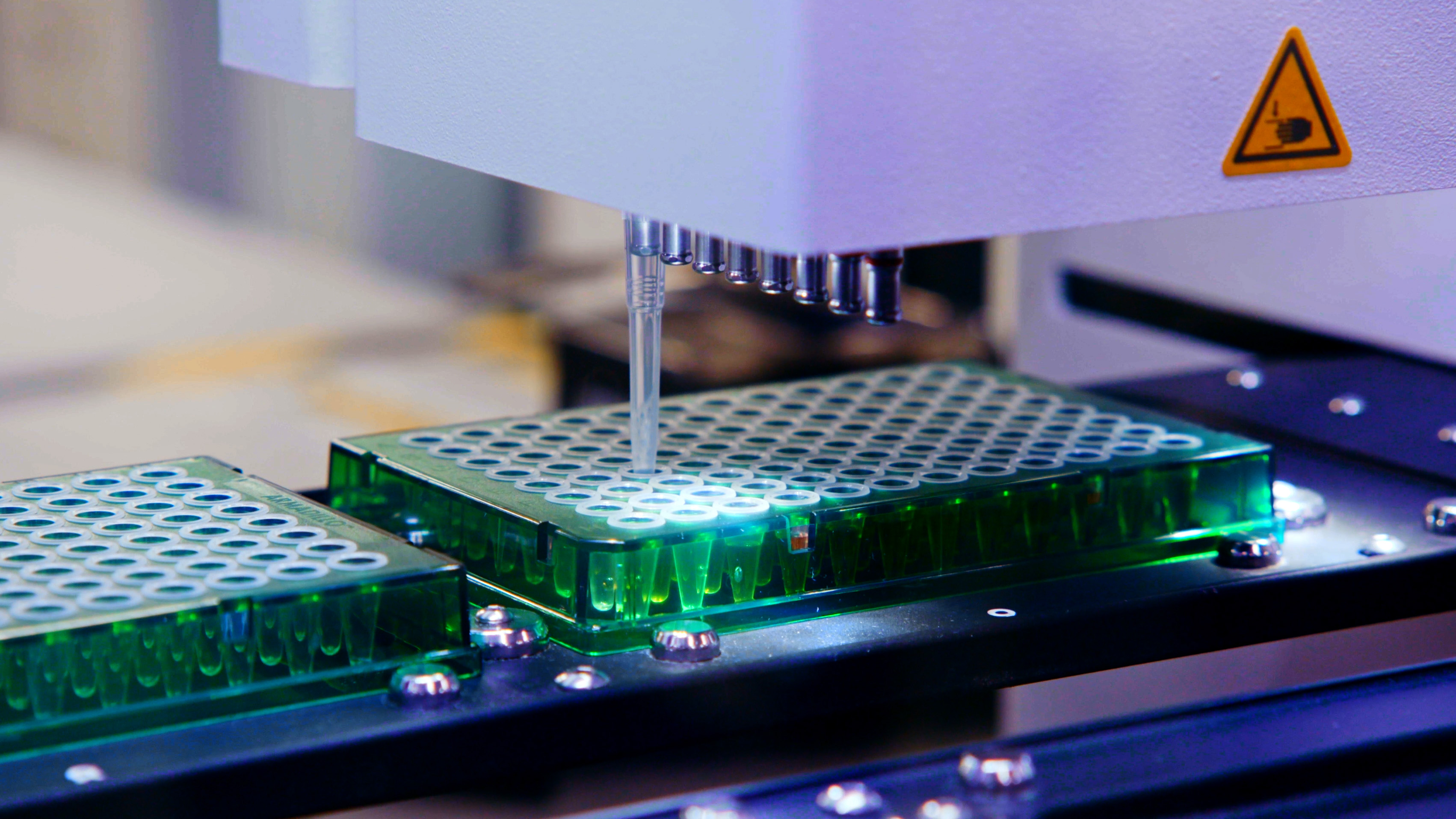

Visitors to LabGenius’ laboratories can see the physical part of the process in action, as liquid handling machines fill sample trays, which are picked up by robot arms and whisked away to the next stage of experimentation.

It is here that wet lab experimentation meets data science.

“The biggest problem of any biological challenge within the AI space, if you compare it to natural language processing or image recognition, is the scarcity of high-quality data that is representative enough of the features of interest,” explains Dr Putintseva.

“You can find a lot of data out there, but the devil is in the details. How was that dataset generated? What biases does it contain? How far can the signal extracted from it be extrapolated within the sequence space?”

LabGenius’ robotic platform produces and characterises the right sort of data, at the quality needed for its machine learning models.

“We believe that now is the time for high quality, beautiful datasets to be generated in biology,” says Dr Putintseva.

Optimise and suggest

Using its carefully curated, high-quality datasets, LabGenius is able to apply artificial intelligence to solve two of the great challenges of novel protein therapy development.

The first is a classic AI problem: how to optimise many variables within highly complex systems.

“We call [this] co-optimization or multi-objective optimization,” says Tom Ashworth, LabGenius’ Head of Technology.

“You might be trying to optimise potency, which might be about the molecule’s affinity, how sticky it is to its target, but at the same time you don’t want to destroy its safety or perhaps some other characteristic like its stability.”
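
To make the idea of co-optimisation concrete, here is a minimal Python sketch of one common way to compare candidates on several objectives at once: keeping only the Pareto-optimal ones, meaning those that no other candidate matches or beats on every objective. The variant names and scores are invented for illustration, and nothing here is claimed to be LabGenius’ actual method.

```python
from typing import Dict, List

Scores = Dict[str, float]

# Hypothetical candidates with made-up scores (higher is better on every axis).
CANDIDATES: Dict[str, Scores] = {
    "variant_a": {"potency": 0.90, "safety": 0.40, "stability": 0.70},
    "variant_b": {"potency": 0.70, "safety": 0.80, "stability": 0.60},
    "variant_c": {"potency": 0.60, "safety": 0.70, "stability": 0.50},
    "variant_d": {"potency": 0.85, "safety": 0.40, "stability": 0.65},
}


def dominates(x: Scores, y: Scores) -> bool:
    """True if x is at least as good as y everywhere and strictly better somewhere."""
    return all(x[k] >= y[k] for k in x) and any(x[k] > y[k] for k in x)


def pareto_front(candidates: Dict[str, Scores]) -> List[str]:
    """Return the names of candidates that no other candidate dominates."""
    return sorted(
        name
        for name, scores in candidates.items()
        if not any(
            dominates(other, scores)
            for other_name, other in candidates.items()
            if other_name != name
        )
    )


FRONT = pareto_front(CANDIDATES)
print(FRONT)
```

In this toy data the most potent variant and the safest variant both survive, because neither beats the other on every objective. That is the trade-off the quote describes: improving potency is only useful if it does not come at the cost of safety or stability.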

AI also informs how LabGenius iterates its experiments.

“[The system] is looking across different features we could change about the molecule — from point mutations of simpler constructs to the overall composition and topology of multi-module proteins. It’s making suggestions about what to design next... to learn about a change in the input and how that maps to a change in the output,” said Tom.

Biological BERT

LabGenius uses Graphcore IPU compute in the Cirrascale IPU cloud to accelerate its training of BERT — the transformer model, best known for natural language processing, now finding an increasingly broad range of applications, including in biotech.

LabGenius researchers take a large body of known proteins and train BERT to predict amino acids that have been masked out of their sequences. In doing so, the model effectively learns the basic biophysics of proteins, according to Dr Putintseva: “Because it does that, the hidden values of that model help us to generate meaningful representation of proteins that we subsequently use to map out the feature of interest.”
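
The masking step can be sketched in a few lines of Python. This is a simplified illustration rather than the code LabGenius or Graphcore uses: the example sequence, the function name `mask_sequence` and the 15% masking rate are assumptions chosen for the sketch, and every selected position is replaced by a mask token, whereas the original BERT recipe also swaps in random or unchanged tokens some of the time.

```python
import random
from typing import Dict, List, Tuple

MASK_TOKEN = "[MASK]"


def mask_sequence(
    sequence: str, mask_fraction: float = 0.15, seed: int = 0
) -> Tuple[List[str], Dict[int, str]]:
    """Hide a fraction of residues; return the masked tokens and the hidden answers."""
    rng = random.Random(seed)  # seeded so the sketch is reproducible
    tokens = list(sequence)  # one token per amino acid letter
    n_mask = max(1, round(len(tokens) * mask_fraction))
    positions = sorted(rng.sample(range(len(tokens)), n_mask))
    labels = {pos: tokens[pos] for pos in positions}  # what the model must predict
    for pos in positions:
        tokens[pos] = MASK_TOKEN
    return tokens, labels


# Arbitrary string of one-letter amino acid codes, for illustration only.
EXAMPLE = "MKTAYIAKQRQISFVKSHFSRQ"
MASKED, LABELS = mask_sequence(EXAMPLE)
print(" ".join(MASKED))
print(LABELS)
```

During training, the model sees only the masked tokens and is scored on how well it recovers the hidden residues. The internal representations it builds along the way are what the quote above calls the “hidden values”.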

LabGenius researchers used Graphcore’s standard PyTorch implementation of BERT, available on GitHub. With minimal need for code modifications, they were able to focus their attention on ensuring the dataset was appropriate for the job in hand.

The fact that Graphcore IPUs were able to cut the training time so dramatically, on a model that needs to be repeatedly re-trained, hands LabGenius a substantial advantage in a competitive industry, according to Tom Ashworth.

“As a startup, how fast we can move, how fast we can iterate, is central to everything.”

“Graphcore has changed what we’re able to do, accelerating our model training time from weeks to days. For our data scientists, that’s really transformative. They can move much more at the speed they think. For us, that’s incredibly valuable,” said Tom.

LabGenius is now looking to expand its use of Graphcore-trained BERT models, including further use within the discovery phase, as well as understanding the developability of its molecules. In addition, it is starting to explore building new AI models on Graphcore systems, including GNNs (Graph Neural Networks) where the IPU has an innate architectural advantage.