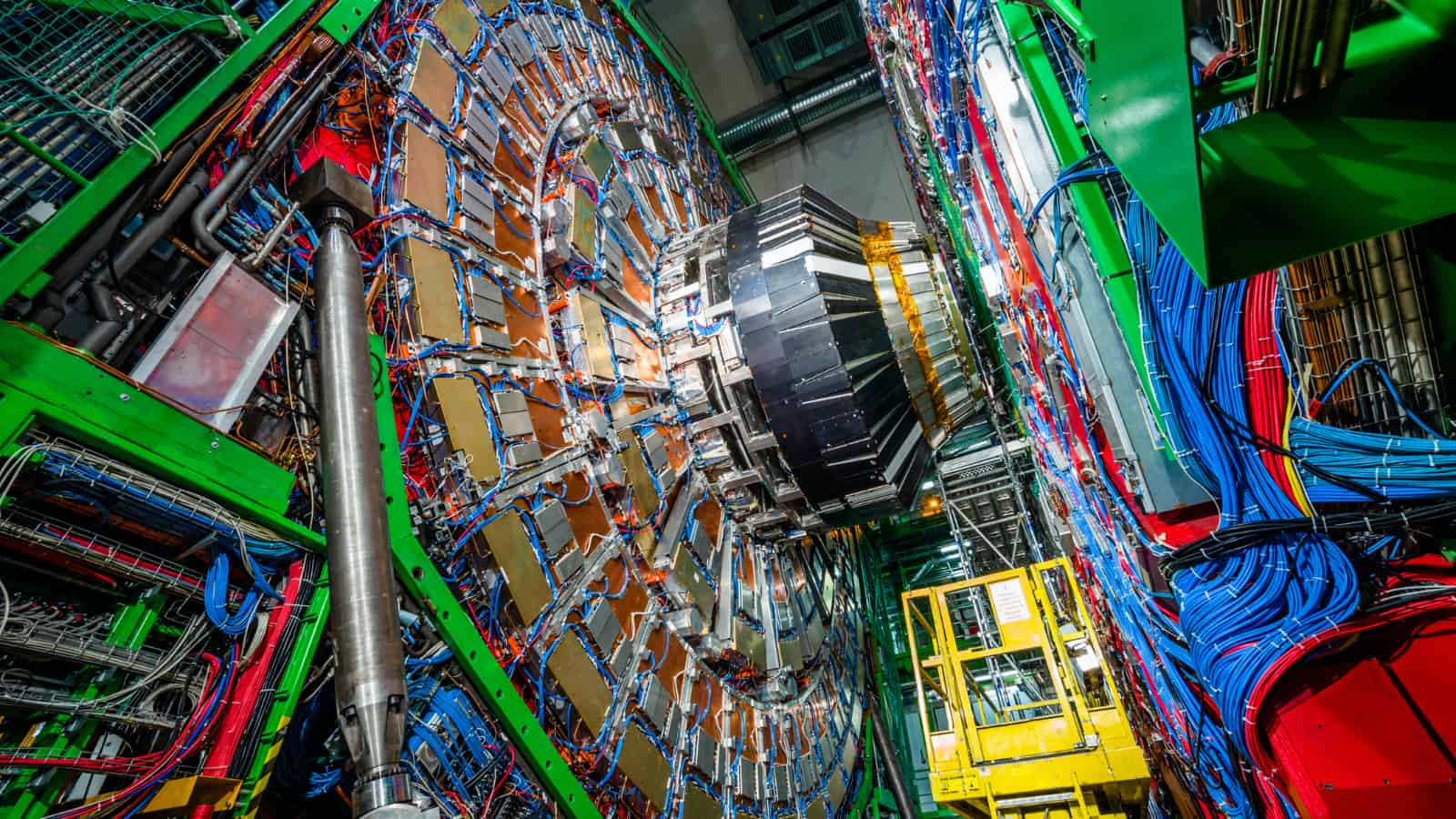

UNLEASH NEW BREAKTHROUGHS

Scientists are increasingly turning to AI methods to address their data processing challenges. IPU hardware empowers researchers to look beyond what they can achieve with today’s CPUs and GPUs and to design the best possible algorithm for their problem.

With 59.4 billion transistors and more FP32 compute than any other processor, the IPU is a massively parallel chip built to run AI workloads efficiently. Many of today’s leading researchers are seeing impressive results when using IPUs in their own scientific experiments.